
Implementation in Python. Here we will create a generalized solution that can be used with any number of slot machines, ads, and so on, and that outputs the best option using UCB.

Reinforcement learning has yet to reach the hype levels of its supervised and unsupervised learning cousins. Even so, it is an exceptionally powerful approach that solves a variety of problems in a completely different way. To learn reinforcement learning, it is best to start from its building blocks and progress from there. In this article, we will work through a Multi-Armed Bandit in Python to solve a business problem.

What are Multi-Armed Bandits?

Imagine you have 3 slot machines and you are given a set of rounds. In each round, you have to choose 1 slot machine, pull its arm, and receive the reward (or none at all) from that slot machine. Then you do it again, and again… Eventually, you figure out which slot machine gives you the most rewards and keep pulling it in each round.

This is the Multi-Armed Bandit problem, also known as the k-Armed Bandit problem. Here k is the number of slot machines available, and your reinforcement learning algorithm needs to figure out which slot machine to pull in order to maximize its rewards.

Multi-Armed Bandits are a classical reinforcement learning example, and they clearly illustrate a well-known dilemma in reinforcement learning: the exploration-exploitation trade-off.

Keep in mind these important concepts. I will cover them in more detail in posts coming up. For now, let’s apply Multi-Armed bandits to a business problem.

Our Business Problem

We will apply the Multi-Armed Bandit method to a theoretical marketing business problem.

You are the Chief Marketing Officer promoting a new product and need to get the word out. To do this, you will be texting your target customers a message. Upon receiving the message, the customer will react one way or the other and generate a reward that you have access to.

You have 4 text messages to send but are not sure which one will work best.

Upper-Confidence-Bound Action Selection Formula

How do you choose the best action? Go back to my example in which you had to choose a slot machine at each round. Particularly at the beginning, you had to test them out, correct? You could either stick to one from the start (not the best option) or try a couple of them to find out the best one.

A common strategy is called the Upper-Confidence-Bound Action selection, in short, UCB.

If you are an optimist, you will like this one! Its strategy is:

Optimism in the face of uncertainty.

This method selects each action according to its potential, captured by the upper bound of its confidence interval, which balances the estimated reward against how uncertain you are of that estimate.

The UCB formula is the following:

\( A_{t} \doteq \underset{a}{\operatorname{argmax}} \left[ Q_{t}(a) + c \sqrt{\frac{\ln t}{N_{t}(a)}} \right] \)

- t = the time (or round) we are currently at

- a = action selected (in our case the message chosen)

- Nt(a) = number of times action a was selected prior to the time t

- Qt(a) = average reward of action a prior to the time t

- c = a number greater than 0 that controls the degree of exploration

- ln t = natural logarithm of t

The idea behind this algorithm is that the value of the square root indicates the uncertainty of the value of action a.

If an action is not chosen, t increases but Nt(a) does not, so the uncertainty of that action increases, which raises its chances of being chosen (exploration).

If an action is chosen, both the numerator and the denominator increase. The numerator’s increments get smaller over time (due to the natural log), but the denominator’s do not, so the uncertainty decreases.

The value with the maximum UCB gets chosen at each round! Let’s go through an example of how to implement UCB in Python.
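To make the formula concrete, here is a minimal sketch that computes the UCB value of each action from its average reward and selection count. The function name `ucb_scores` and the example numbers are made up for illustration. Giving untried actions an infinite score, so that they are picked first, is one common convention rather than part of the formula itself.

```python
import math


def ucb_scores(avg_rewards, counts, t, c=2.0):
    """Return Q_t(a) + c * sqrt(ln t / N_t(a)) for every action a."""
    scores = []
    for q, n in zip(avg_rewards, counts):
        if n == 0:
            # Convention (an assumption): an untried action gets top priority.
            scores.append(math.inf)
        else:
            scores.append(q + c * math.sqrt(math.log(t) / n))
    return scores


# Illustrative numbers only: three actions after 22 rounds.
avg_rewards = [1.0, 1.2, 0.9]   # Q_t(a)
counts = [10, 10, 2]            # N_t(a)
scores = ucb_scores(avg_rewards, counts, t=22, c=1.0)
best_action = max(range(len(scores)), key=scores.__getitem__)
print(scores, best_action)
```

With these numbers the third action is selected even though it has the lowest average reward: it has only been tried twice, so its uncertainty term dominates.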

Multi-Armed Bandit – Generate Data

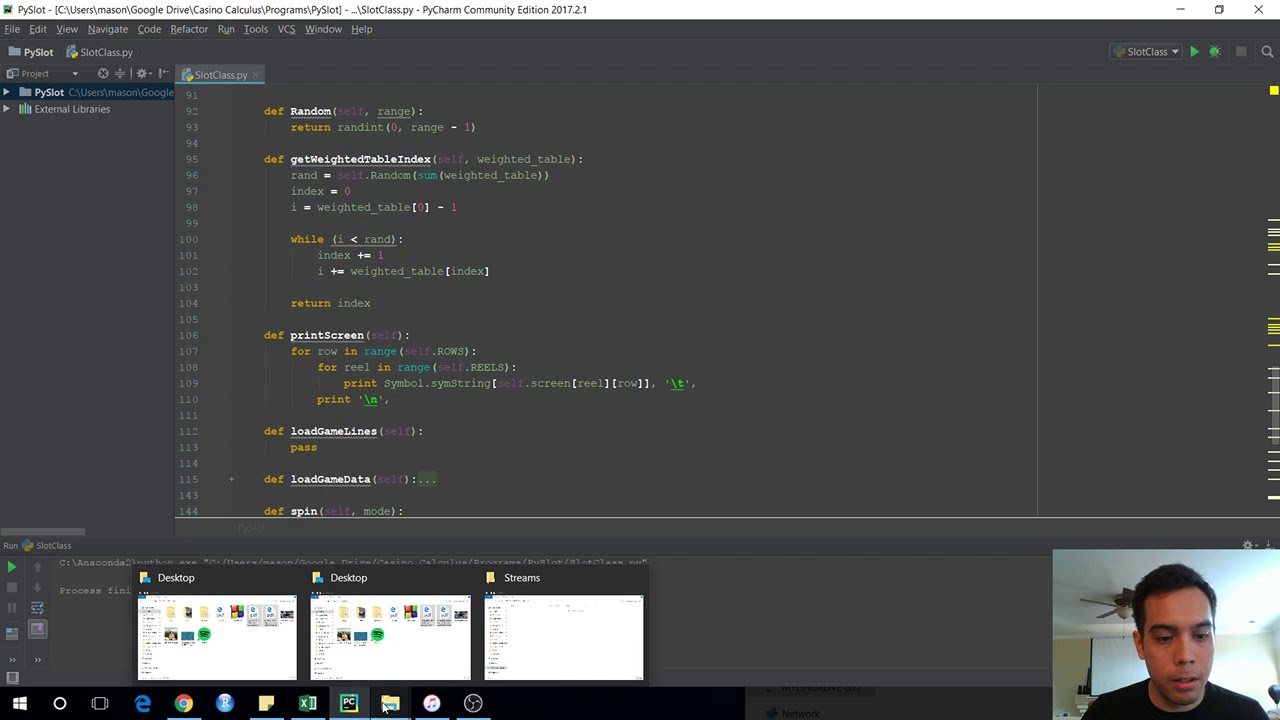

Let us begin implementing this classical reinforcement learning problem using Python. As always, import the required libraries first.

The last line above is only needed if you are using a Jupyter notebook for the implementation.

Next up, we will generate our dataset consisting of 5 columns. The first is the user we sent SMS messages to, followed by m1 through m4, one column per message. Each value is the reward for that combination of message and user. Each message’s rewards follow a normal distribution with a mean between 95 and 105 and a standard deviation of 5 or 10. We generate 10,000 samples.
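A sketch of how such a dataset could be generated with NumPy follows. The exact mean and standard deviation of each message are assumptions chosen to fit the description (means between 95 and 105, standard deviations of 5 or 10, m2 the highest), and the seed is arbitrary.

```python
import numpy as np

rng = np.random.default_rng(seed=0)  # arbitrary seed, for reproducibility only
n_users = 10_000

# Hypothetical (mean, standard deviation) per message; m2 has the highest mean.
message_params = {"m1": (100, 5), "m2": (105, 10), "m3": (95, 5), "m4": (98, 10)}

# Five columns: the user id plus one reward column per message.
dataset = {"user": np.arange(n_users)}
for name, (mean, std) in message_params.items():
    dataset[name] = rng.normal(loc=mean, scale=std, size=n_users)

sample_means = {name: float(dataset[name].mean()) for name in message_params}
print(sample_means)
```

If you prefer to work with a table, the same dictionary can be wrapped in a pandas DataFrame with `pd.DataFrame(dataset)`.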

You can see our dataset below.

To get a better sense of our average rewards for each message, let’s visualize the above dataset in a box plot.

Looking at the box plot above, if you had to pick the best message, which one would you choose? It would have to be m2, of course!

Do you think our multi-armed bandit algorithm will pick this up? We will find out soon.

Multi-Armed Bandit – UCB Method

In order to solve our Multi-Armed bandit problem using the Upper-Confidence Bound selection method, we need to iterate through each round, take an action (select and send a message), see its returns and pick again. Eventually, we will be selecting the best message.

To implement UCB in Python, first initialize our variables. Each is commented on below to aid in your understanding. These will help us solve the UCB formula previously shown.

Next, we will loop through each round represented by the variable t. At each round, the UCB_Values array will hold the UCB values as of that round and these get set to 0. Then we loop through each possible action. If an action has never been selected, it gets chosen. Otherwise, we calculate the UCB value for each action and store it in the UCB_Values array.

At the end of each round, the action containing the maximum UCB Value gets selected. The numpy argmax function returns the index of the maximum value in the array. This is stored in the action_selected variable.

The last block of code below, under “update Values as of round t” updates the values of our important variables with the information our system has seen thus far.

To allow us to perform additional analysis, I have added this additional piece to our code after “sum_rewards[action_selected] += reward”.

We store values at each round corresponding to the reward we would have received by choosing a message at random in each round. Obviously, we aim to do better than that through the UCB algorithm.
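The steps described above can be sketched as follows. The names `UCB_Values`, `action_selected`, `Nt_a`, `sum_rewards` and `reward` come from the text; everything else is an assumption made for illustration, including the simulated reward table, the value `c = 10` (picked to be on the scale of the reward standard deviations) and the `total_random` bookkeeping.

```python
import math

import numpy as np

rng = np.random.default_rng(seed=0)
n_rounds, n_actions = 10_000, 4

# Hypothetical reward table: one row per round, one column per message m1..m4.
means = np.array([100.0, 105.0, 95.0, 98.0])
stds = np.array([5.0, 10.0, 5.0, 10.0])
rewards = rng.normal(means, stds, size=(n_rounds, n_actions))

c = 10.0                               # exploration constant (assumed value)
Nt_a = np.zeros(n_actions, dtype=int)  # times each action was selected
sum_rewards = np.zeros(n_actions)      # total reward collected per action
total_reward = 0.0                     # reward collected by UCB
total_random = 0.0                     # reward a random choice would have collected

for t in range(1, n_rounds + 1):
    UCB_Values = np.zeros(n_actions)
    for a in range(n_actions):
        if Nt_a[a] == 0:
            UCB_Values[a] = np.inf     # never selected yet: make sure it gets chosen
        else:
            Qt_a = sum_rewards[a] / Nt_a[a]
            UCB_Values[a] = Qt_a + c * math.sqrt(math.log(t) / Nt_a[a])
    action_selected = int(np.argmax(UCB_Values))

    # update values as of round t
    reward = rewards[t - 1, action_selected]
    Nt_a[action_selected] += 1
    sum_rewards[action_selected] += reward
    total_reward += reward

    # baseline: reward of a message picked uniformly at random in the same round
    total_random += rewards[t - 1, rng.integers(n_actions)]

print(Nt_a, total_reward, total_random)
```

Because the rewards here sit around 100 with standard deviations of 5 to 10, a very small `c` can leave an unlucky first draw of the best message uncorrected, which is why the sketch uses a larger constant.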

Let us first see if our method performs better than choosing an action randomly.

Indeed it performs much better than selecting an action randomly!

Our variable, Nt_a, holds the number of times each action was selected. We said previously that for this exercise, m2 would be the better one, as its distribution has the highest average. Plot each action and the number of times each was selected.

What do you think? Just as expected!

Conclusion

We have now implemented the Upper-Confidence-Bound selection method to solve a classical reinforcement learning problem, the Multi-Armed Bandit. UCB is widely used to solve digital marketing problems. However, there are other methods for solving these k-Armed Bandits, such as epsilon-greedy and Thompson sampling. Hope you enjoyed this article, stay tuned for more!

AB test: from event tracking to abandoning treatment

Abandoning AB test treatment, step 1

Whether we follow the frequentist school or the Bayesian school, we still have to go through the whole AB testing process before making a decision. In many cases, however, the opportunity cost of using AB tests for every decision is too high, the labor cost is too high (data scientists are expensive), and the losses caused by a poor version are too large. For these and other reasons, data-driven decision making through AB tests ends up as a mere slogan.

Abandoning AB test treatment, step 2

Even if a developer is determined to go down the data-driven road with AB tests, building an in-house AB test platform is too expensive, while third-party AB test services lack flexible data analysis capabilities.

If an event has no tracking point, the only way to AB test it is to release a new version of the SDK. Until that SDK version reaches a certain coverage rate, there is still no way to run the AB test. As a result, using AB tests for product iteration is postponed until it is forgotten. AB test treatment abandoned.

Abandoning AB test treatment, step 3

Even if a developer uses the statistics SDK of Youmeng +, sets up custom event tracking properly, splits users properly, estimates the sample size, collects the data correctly, and runs the AB test correctly, the result may still be that there is no difference between the two versions. Sometimes the new version even turns out worse (cue the overused Facebook example).

As an operator, how do you report negative results to your boss? As a senior person on a technical team, how do you decide to change the version? AB test treatment abandoned. I once asked a senior colleague why such a mature and useful method as the AB test is not more popular in China. The answer was: after every revision or operation campaign, everyone is waiting to claim credit. Who wants to see the results of data analysis?

In the course of running many AB tests, senior people keep asking: is there an AB test algorithm that iterates faster? Is there a less strict AB test? In operation scenarios, the most frequently asked questions are: my campaign only lasts three days, so how long will your AB test take? Can you run the AB test before the campaign starts? These are questions that strike at the soul. After in-depth discussion, it turns out that what is really wanted is a scheme that optimizes automatically, faster, and with less risk.

AB test therapy

Do we have any good ways to solve these problems? Of course there are solutions. For steps 1 and 2, the only remedy is to set up proper event tracking that meets the main statistical needs first, because AB tests are built on top of the statistics module. For step 3, the remedy is multi-armed bandits.

Multi armed bandits


So what’s going on with this algorithm that automatically optimizes to find the best solution? How can this algorithm achieve faster, automatic selection of optimization schemes?

Zhang San in Las Vegas

Let’s tell a story about Zhang San gambling in Las Vegas (after all, statistics originated from gambling). One day, the gambler Zhang San came to Las Vegas with his savings. Armed with his high-tech glasses and the bandit algorithms he had recently studied, he wanted to beat the Las Vegas casinos and become a master gambler.

From his years of gambling experience, he knows that each slot machine in the casino has a different winning rate, and that the winning rate of a given machine does not change. Rumor has it that one slot machine in this casino has a winning rate of more than 50%. His strategy is to find the slot machine with the largest winning rate.

So how can Zhang San find the slot machine with the largest winning rate? One of the simplest strategies is to try every slot machine in the casino, calculate the winning rate of each, and then select the one with the largest winning rate. This method is similar to an AB test, which distributes the traffic evenly across the schemes.

One obvious drawback of this method is that the cost of trial and error is very high, even though the slot machine with the largest winning rate is found in the end. If we could tell during the process that some schemes are unlikely to be the best, we would not have to waste time and effort on second-best schemes. Could we then find the best scheme faster and spend less money? So the question is: how do we define which algorithm is better at finding the best scheme?

The measure used here is regret: the difference between the winnings of the best scheme and the winnings of the bandit algorithm while it explores for the best scheme.
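Written as a formula, with notation that is an assumption on our part rather than taken from the original figure, this difference after \(T\) rounds can be expressed as:

\[ R_{T} = T \mu^{*} - \sum_{t=1}^{T} \mu_{a_{t}} \]

Here \(\mu^{*}\) is the expected winnings per round of the best slot machine, and \(\mu_{a_{t}}\) is the expected winnings of the machine chosen in round \(t\). A lower \(R_{T}\) means less money was lost while searching for the best machine.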

Zhang San’s bandits algorithm

As a gambler, Zhang San naturally knows some bandit algorithms, so which strategies does he plan to use? What he learned from his master were epsilon-greedy and upper confidence bound (UCB).

In the epsilon-greedy algorithm, a proportion epsilon of the rounds goes to a scheme other than the current best, and the remaining proportion 1 - epsilon goes to the current best scheme. Epsilon is a proportion that has to be chosen by hand. For example, a scheme other than the current best is selected 10% of the time, and the current best scheme is selected 90% of the time.
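As a rough sketch of this rule, the simulation below plays three slot machines with epsilon = 0.1. The winning rates, the number of rounds and the function name `epsilon_greedy_choice` are hypothetical. Exploration picks uniformly among the machines other than the current best, as described above; many implementations explore over all machines instead.

```python
import random


def epsilon_greedy_choice(estimates, epsilon, rng):
    """Exploit the current best arm, or explore another arm with probability epsilon."""
    best = max(range(len(estimates)), key=estimates.__getitem__)
    if len(estimates) > 1 and rng.random() < epsilon:
        others = [a for a in range(len(estimates)) if a != best]
        return rng.choice(others)
    return best


rng = random.Random(0)
true_rates = [0.3, 0.55, 0.4]   # hypothetical winning rates, unknown to the player
wins = [0, 0, 0]
pulls = [0, 0, 0]

for _ in range(5000):
    # Estimated winning rate per machine (0.0 for machines not tried yet).
    estimates = [w / p if p else 0.0 for w, p in zip(wins, pulls)]
    arm = epsilon_greedy_choice(estimates, epsilon=0.1, rng=rng)
    pulls[arm] += 1
    wins[arm] += rng.random() < true_rates[arm]   # True counts as 1

print(pulls)
```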

However, there is an obvious problem with this method. Before Zhang San left, the master told him that it might get stuck in a local optimum and fail to find the global optimum for a long time; that is, it might not find the slot machine with the highest winning rate. The master told him to use it carefully.

So Zhang San decided to bet using the UCB algorithm. How does the UCB algorithm work?

Each slot machine is given a score made up of two terms. The first term is the average winning rate of the slot machine. The second term is a bonus related to the number of attempts, where t is the number of experiments conducted so far and T_{ij} is the number of times the slot machine has been tried. A coefficient in front of the bonus term adjusts its influence.

After each experiment, the score of each slot machine is recalculated, and the slot machine with the highest score is selected for the next experiment. Given enough time, the UCB bandit algorithm can find the best scheme. Generally speaking, UCB performs better than epsilon-greedy as measured by regret.

Li Si’s bandits algorithm

As it happens, Zhang San has a senior fellow disciple called Li Si, who in his early years studied Bayesian methods under Master Bayes. One great advantage of the Bayesian approach is that it can make use of the results of other people’s practice; this is the prior distribution in Bayesian statistics.

Li Si watched Zhang San’s experiments on the slot machines and recorded the winning rate of each one. But Li Si could not wait too long: once Zhang San had found the slot machine with the largest winning rate, Li Si would no longer be able to rely on that machine to win money. So Li Si stepped in as soon as he felt he had accumulated enough data. He used the Thompson sampling method, which is based on Bayesian statistics.

Building on Zhang San’s attempts, Li Si gave each slot machine a prior based on the beta distribution, and then began to look for the slot machine with the largest winning rate. In each experiment, he drew a random number from each machine’s beta distribution and selected the slot machine with the largest random number. When a slot machine has accumulated more data, the variance of its beta distribution is smaller, and the random number drawn each time is closer to the mean. When a slot machine has accumulated less data, the variance of its beta distribution is larger, and the random number drawn each time varies more.
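A minimal sketch of this sampling loop for win/lose slot machines is shown below. It starts every machine from a uniform Beta(1, 1) prior, which is an assumption; in the story, Li Si would instead start from the wins and losses he observed while watching Zhang San. The winning rates and the number of rounds are made up.

```python
import random

rng = random.Random(0)
true_rates = [0.3, 0.55, 0.4]   # hypothetical winning rates, unknown to the player

# One Beta(alpha, beta) distribution per machine. Prior observations from
# someone else's play could be added to these counts before starting.
alpha = [1, 1, 1]   # 1 + number of wins
beta = [1, 1, 1]    # 1 + number of losses
pulls = [0, 0, 0]

for _ in range(5000):
    # Draw one random number per machine and play the machine with the largest draw.
    samples = [rng.betavariate(a, b) for a, b in zip(alpha, beta)]
    arm = max(range(len(samples)), key=samples.__getitem__)

    won = rng.random() < true_rates[arm]
    alpha[arm] += won        # True counts as 1
    beta[arm] += not won
    pulls[arm] += 1

print(pulls)
```

Machines with few observations keep a wide distribution and still produce a large draw now and then, which is where the exploration comes from.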

The bandit algorithm of Wang Wu, Zhang San’s master

Zhang San’s master had actually come to Las Vegas earlier. Through inside information, he knows that the winning rate of each slot machine varies with many factors, such as whether it is a weekend and whether the player is a man or a woman.

Zhang San’s and Li Si’s algorithms do not take such external factors into account. Bandit algorithms that do are called contextual bandits. Zhang San’s master uses the LinUCB algorithm, which is based on the UCB algorithm plus ridge regression.
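To illustrate the idea, here is a small sketch of the LinUCB variant that keeps a separate ridge regression per slot machine. The class name `LinUCBArm`, the two-feature context (a constant term and a weekend flag), the winning probabilities and `alpha = 1.0` are all assumptions made up for this example.

```python
import numpy as np


class LinUCBArm:
    """One arm: a ridge regression estimate plus a confidence bonus."""

    def __init__(self, n_features, alpha=1.0):
        self.alpha = alpha               # weight of the confidence bonus
        self.A = np.eye(n_features)      # X^T X + I (the ridge term)
        self.b = np.zeros(n_features)    # X^T y

    def score(self, x):
        A_inv = np.linalg.inv(self.A)
        theta = A_inv @ self.b           # ridge regression coefficients
        return theta @ x + self.alpha * np.sqrt(x @ A_inv @ x)

    def update(self, x, reward):
        self.A += np.outer(x, x)
        self.b += reward * x


rng = np.random.default_rng(0)
# Hypothetical truth: machine 0 pays better on weekdays, machine 1 on weekends.
# Winning probability = true_theta[arm] @ [1, is_weekend].
true_theta = np.array([[0.6, -0.4], [0.2, 0.5]])
arms = [LinUCBArm(n_features=2, alpha=1.0) for _ in range(2)]
choices = {0: [0, 0], 1: [0, 0]}   # is_weekend -> number of pulls per machine

for _ in range(3000):
    is_weekend = int(rng.integers(2))
    x = np.array([1.0, float(is_weekend)])
    arm = int(np.argmax([a.score(x) for a in arms]))
    reward = float(rng.random() < true_theta[arm] @ x)
    arms[arm].update(x, reward)
    choices[is_weekend][arm] += 1

print(choices)
```

A context-free bandit would have to settle on one machine overall, whereas this sketch can learn a different favorite for weekdays and weekends.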

If you want to know which of Zhang San, Li Si and Wang Wu is the quickest to find the legendary slot machine, read on.

When should bandits and ab tests be used?

Figure from VWO’s website

The main problem bandit algorithms address is how to find the best scheme faster and with less loss. The figure above shows how a bandit optimizes traffic allocation while searching for the best scheme. Bandits can find the best scheme with minimal loss.

Why do we still need AB tests?

First of all, AB tests are mainly used to guide important business decisions and product version iterations, which may be affected by many metrics. Bandits can only optimize a single metric. Of course, multiple metrics can be combined into a composite metric, but the optimization target of a bandit is still a single metric.

Secondly, AB tests are mainly used to establish the statistical significance of each version. In other words, you have spent time developing a new version, and you need to be sure whether it beats the previous version and in what way. Did retention improve, or did users’ usage time increase?

This knowledge can be used in later product iterations, but bandits can’t help you analyze the data and obtain it.

So when should we use the bandits algorithm?

Use bandits when, like Zhang San, you care about only a single metric such as conversion rate or retention rate, and you don’t care about interpreting and analyzing the results. Use them when your operation campaign lasts only a few days or a single day, and you don’t have time to wait for an AB test to reach statistical significance. This is the “faster AB test” that some senior people and app developers ask for. In addition, if you have metrics that need to be optimized over a long period and that change often, this is also an important application scenario for bandits.

The graph is from VWO’s blog

In short, AB tests are suited to testing changes with a long change cycle, where the knowledge gained should generalize. Bandit algorithms are suited to optimization scenarios with fast changes and short cycles, where the knowledge gained may not generalize.

Using bandits in Youmeng +

The u-push product of Youmeng + reaches a large number of external users, yet the push strategy of most developers is a very simple scheduled broadcast, with almost no personalized sending strategy (except for the headline). Even if developers want to optimize sending time and content with existing tools, their accumulated tag and user behavior data is not sufficient.

For the time being, domestic peers do not offer this function, partly because their data volume is far smaller than the data coverage of Youmeng +. However, many developer service platforms in the United States, such as Recombee, Airship and Leanplum, not only optimize sending time but also offer full-funnel, closed-loop user activation and anti-churn products based on the user life cycle and other tags.

Our future work is to build such a user-friendly product, and our starting point is to optimize delivery time, which is the function Leanplum offers. If we can send a message while users are using the app, or when they are most willing to receive push messages, users are more likely to open the message once it reaches them. This is a win-win: a better user experience and a higher push click-through rate.

The send-time optimization scheme of Youmeng + is based on the Thompson sampling method and uses the beta distribution to compute a score at the granularity of user + app + period.
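The sketch below only illustrates what scoring at the granularity of user + app + period could look like; it is not the Youmeng + implementation. The period buckets, the helper names `pick_period` and `record_feedback`, and the click rates in the simulation are all made up.

```python
import random
from collections import defaultdict

PERIODS = ["morning", "noon", "evening", "night"]   # hypothetical time buckets

# (user, app, period) -> [1 + clicks, 1 + ignored pushes], used as Beta parameters
beta_params = defaultdict(lambda: [1, 1])


def pick_period(user, app, rng):
    """Draw a click rate per period from its Beta distribution and pick the largest."""
    draws = {p: rng.betavariate(*beta_params[(user, app, p)]) for p in PERIODS}
    return max(draws, key=draws.get)


def record_feedback(user, app, period, clicked):
    """Add one click or one ignored push to the counts for this key."""
    beta_params[(user, app, period)][0 if clicked else 1] += 1


# Simulation with made-up click rates for a single user and app.
rng = random.Random(0)
true_click_rate = {"morning": 0.02, "noon": 0.05, "evening": 0.30, "night": 0.10}
sent = defaultdict(int)
for _ in range(2000):
    period = pick_period("user_1", "app_1", rng)
    clicked = rng.random() < true_click_rate[period]
    record_feedback("user_1", "app_1", period, clicked)
    sent[period] += 1

print(dict(sent))
```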

We found that collaborative filtering can increase clicks from users who have no clicks in the data, while Thompson sampling is better at determining the optimal sending time for users who do have clicks.

So how to combine collaborative filtering and Thompson sampling to improve users’ push experience and click-through rate will be the direction of future exploration.

The end of the story

At the end of the story, Zhang San, Li Si and Wang Wu all lost their savings and left Las Vegas, because they did not know the statistical principle of gambler’s ruin. The story tells us to stay away from gambling. Small gambling is not pleasant, and big gambling is even more harmful.